AI-Enabled Expertise Discovery: The ExpertFinder Story

Newfire builds AI-enabled systems to solve real operational challenges for both our clients and our own engineering organization. One example is ExpertFinder, an internal platform designed to help teams quickly identify colleagues with relevant hands-on experience. By capturing short accounts of real project work and making them searchable across the company, ExpertFinder turns everyday engineering knowledge into something teams can reuse. Read on to learn what this recent example from Newfire’s practice reveals about applying AI to real engineering workflows and meet the team behind the build.

The Expertise Signal Problem

As engineering organizations scale, experience spreads across projects, time zones, and client environments. People learn quickly, solve hard problems, and move on. The organization gets stronger, but the knowledge becomes harder to reuse at speed. Real project experience isn’t packaged into something the company can reliably match against the next need.

One way to recognize this stage of growth is by the questions that start circulating across teams:

- “Who can help interview for X?”

- “Has anyone implemented Y in production?”

- “We need a quick sanity check from someone who’s done Z before.”

As Newfire grew from a startup into a hundreds-strong global engineering team, we began encountering the same challenge. A team would need someone with very specific, hands-on experience. We knew that expertise existed inside Newfire, but the reliable path was still “ask around until the right person appears.” That works when you’re small. At scale, it becomes expensive, costing not only money but also significant time, context switching, and repeated effort.

As engineers, we chose to treat this as what it is: a scaling problem worth solving the same way we would for a client. So, in early 2024, we set out to build a software tool whose first goal was to make the common “who can help with this?” discovery faster and more dependable.

Today, after some iteration and the inclusion of an LLM-powered conversational interface, it goes beyond finding names: it continuously turns day-to-day project experience into a shared, matchable signal so the organization can connect the right people to the right needs in minutes, with the context to act.

We could always solve it eventually. What we wanted was a way to make real project experience immediately usable for the next team, without adding process overhead.

– Mykola Kovalchuk, Product Development Lead at Newfire

That system is Newfire’s AI-enabled expertise discovery platform and knowledge base, ExpertFinder.

What We Built: ExpertFinder

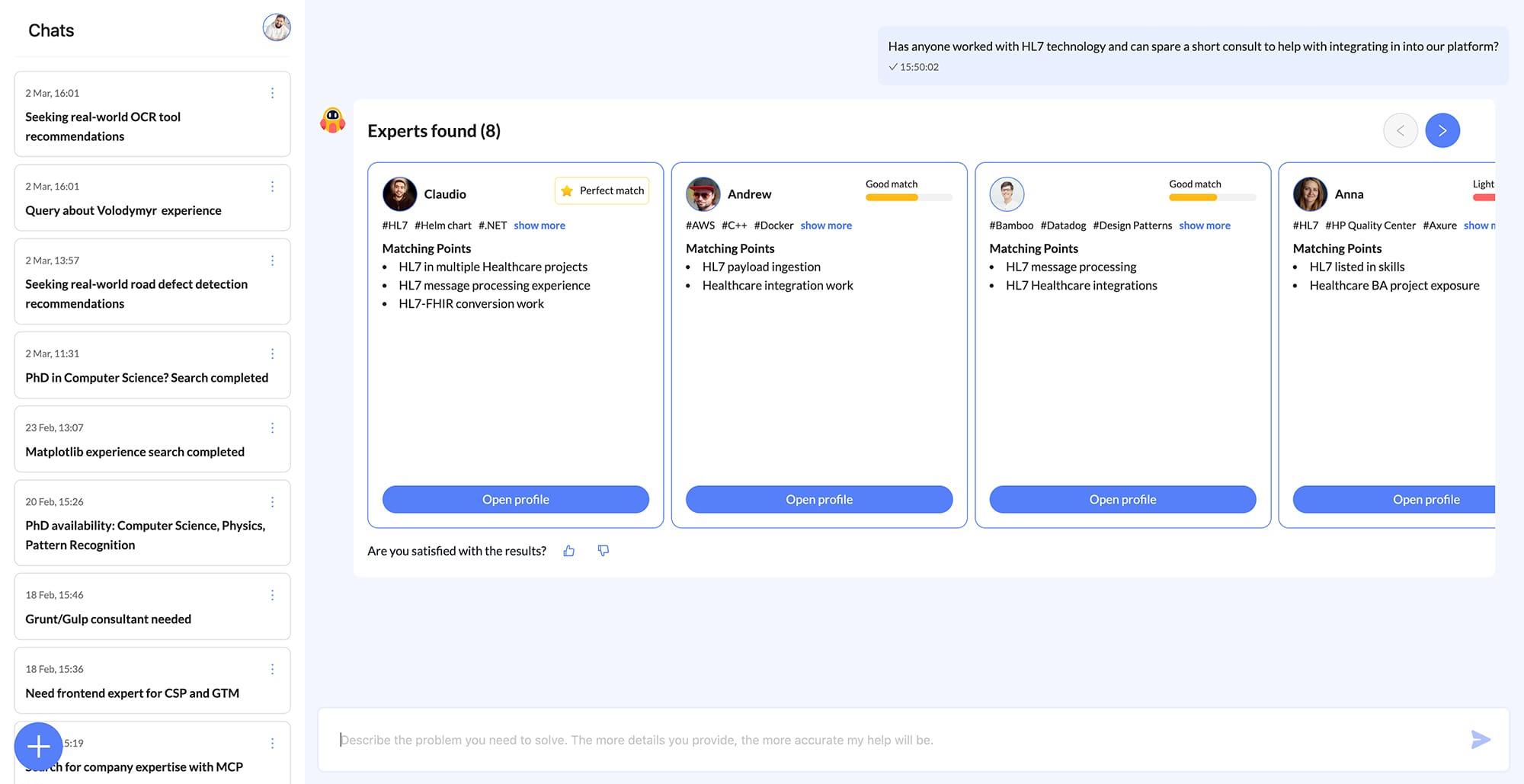

ExpertFinder is an internal platform that helps teams find colleagues with relevant hands-on experience. Instead of relying on static skill lists or resumes, it captures short descriptions of real project work: the problem someone solved, how they approached it, and the technologies involved. That context matters. Knowing someone has worked with HL7 interfaces is useful, but knowing they built HL7 interfaces for inpatient order management with Epic tells you far more about the problems they can solve.

Over time, those small contributions build a searchable knowledge base of real engineering experience across the organization.

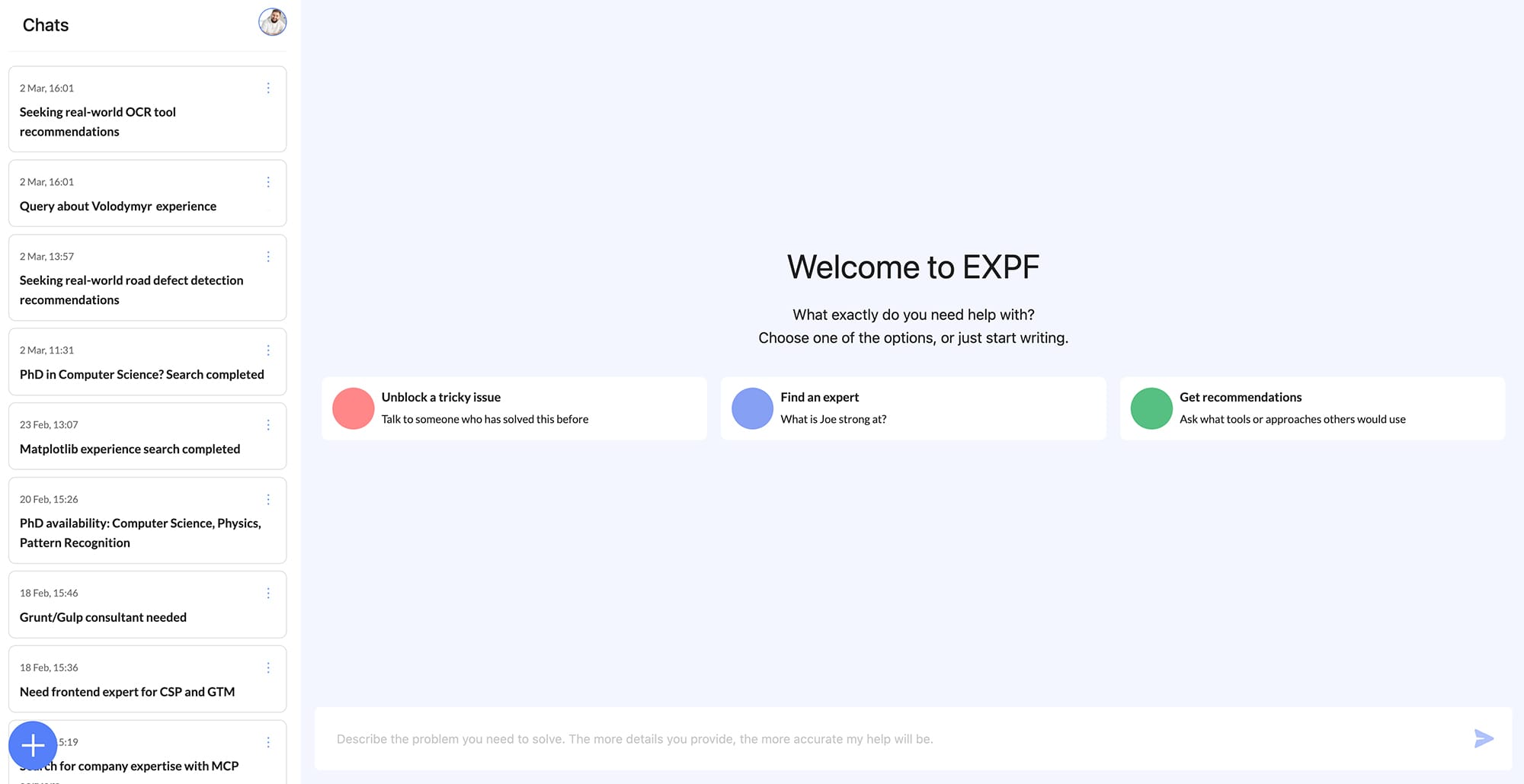

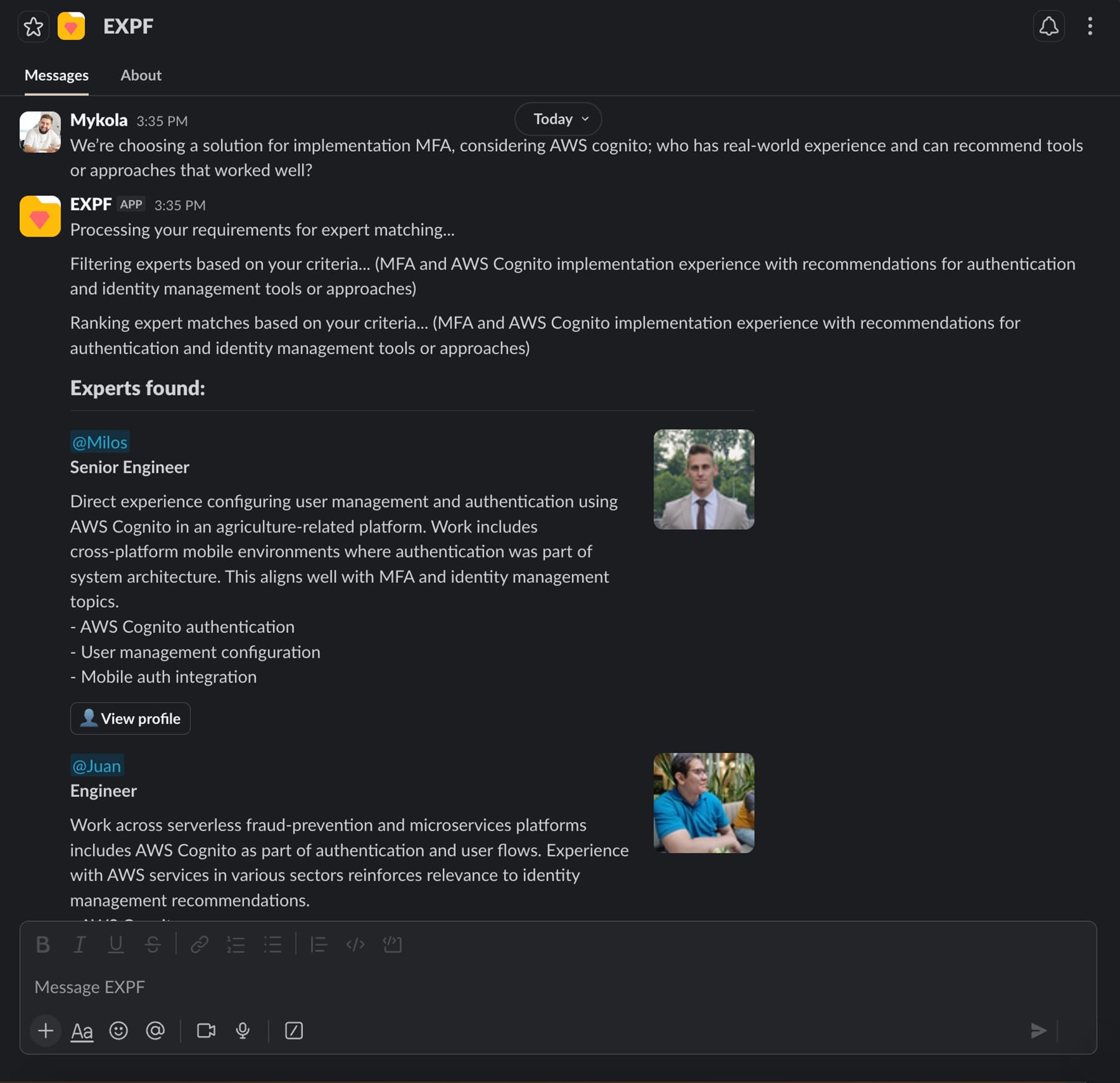

The product lives where the work already happens: a Slack chatbot, with a web version for deeper searches and profile management. When someone needs expertise, be it interview support, a second opinion, or a niche implementation detail, ExpertFinder uses a conversational interface to surface strong matches based on real-world experience, not stale self-reported skills.

The key differentiator is how the system learns from real work. Rather than functioning as a traditional HR database, ExpertFinder treats each contribution as a small piece of context, gradually making expertise across the organization easier to discover and match.

A Look Under the Hood of ExpertFinder

ExpertFinder combines conversational AI, automated evaluation, and effortless knowledge capture to make expertise discovery practical at scale. Here’s how those pieces work together.

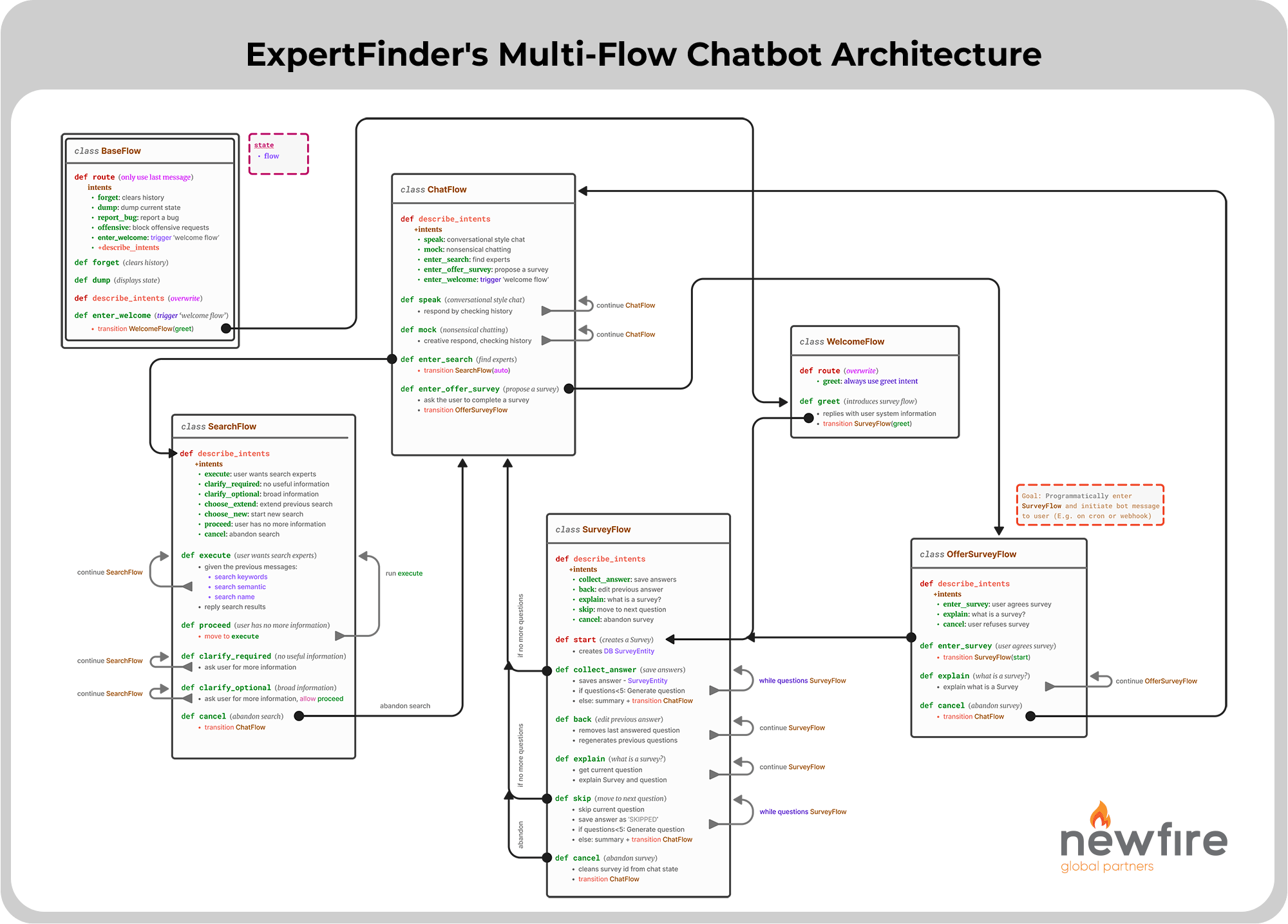

Multi-Flow Conversational Intelligence

Slack is not a clean UI. People change topics mid-thread. They ask vague questions. They reply hours later. They switch from “find someone” to “by the way, update my profile.”

We built ExpertFinder to address that reality rather than forcing users into rigid commands.

One of the core capabilities is multi-flow conversational intelligence: ExpertFinder can move between intents (searching for experts, capturing knowledge, refining criteria) without resetting the conversation or losing context.

Under the hood, this required a deliberate conversation architecture (stateful flow management rather than a single prompt loop), plus guardrails to keep the experience stable as features expand.

When engineers design chatbots, they often fall into one of two extremes: they either make the bot too sophisticated by giving the LLM too much freedom and creativity, causing frequent conversation drift from the user’s mental model, or they overfit user message parsing by requiring certain “magic keywords” to trigger actions.

Being a frustrated user myself (I hate when chatbots offer useless actions!), I aimed for a space in between: using the LLM to narrow down its own freedom of choice and then letting it be creative within a limited set of dynamic constraints.

This is how we ended up building state-based intent flows: it’s like a state machine, but the transitions are augmented by data derived from the sliding window and the transient conversation state.

– Andrew, Software Architect on ExpertFinder
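To make the idea of state-based intent flows concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not ExpertFinder's actual code: the `Flow` names, the `last_query` key, the window size of five, and the keyword-based `pick_flow` function (a stand-in for the real LLM call) are all hypothetical. What it shows is the shape of the approach: a static transition table is narrowed by transient conversation state, and the model may only choose among the options that remain.

```python
from collections import deque
from dataclasses import dataclass, field
from enum import Enum, auto


class Flow(Enum):
    IDLE = auto()
    FIND_EXPERT = auto()
    REFINE_SEARCH = auto()
    UPDATE_PROFILE = auto()


ACTIVE = {Flow.FIND_EXPERT, Flow.REFINE_SEARCH, Flow.UPDATE_PROFILE}

# Static part of the state machine: where each flow is allowed to go next.
STATIC_TRANSITIONS = {
    Flow.IDLE: {Flow.FIND_EXPERT, Flow.UPDATE_PROFILE},
    Flow.FIND_EXPERT: set(ACTIVE),
    Flow.REFINE_SEARCH: set(ACTIVE),
    Flow.UPDATE_PROFILE: set(ACTIVE),
}


@dataclass
class Conversation:
    flow: Flow = Flow.IDLE
    # Sliding window of recent messages (size 5 is an arbitrary assumption).
    window: deque = field(default_factory=lambda: deque(maxlen=5))
    # Transient conversation state, e.g. the search being worked on.
    scratch: dict = field(default_factory=dict)


def allowed_flows(conv):
    """Narrow the static transitions using transient state."""
    options = set(STATIC_TRANSITIONS[conv.flow])
    if "last_query" not in conv.scratch:
        # Nothing to refine yet, so the model is never offered that flow.
        options.discard(Flow.REFINE_SEARCH)
    return options


def pick_flow(message, window, options):
    """Hypothetical stand-in for the LLM call.

    A real implementation would send the message and the window to a model
    and ask it to choose one of `options`; keyword rules keep this runnable.
    """
    text = message.lower()
    if "profile" in text and Flow.UPDATE_PROFILE in options:
        return Flow.UPDATE_PROFILE
    if text.startswith(("only", "also", "but")) and Flow.REFINE_SEARCH in options:
        return Flow.REFINE_SEARCH
    return Flow.FIND_EXPERT


def step(conv, message):
    options = allowed_flows(conv)
    choice = pick_flow(message, list(conv.window), options)
    if choice not in options:
        # Guardrail: ignore any choice outside the allowed set.
        choice = conv.flow
    if choice is Flow.FIND_EXPERT:
        conv.scratch["last_query"] = message
    conv.window.append(message)
    conv.flow = choice
    return choice


conv = Conversation()
for msg in [
    "Who has built HL7 interfaces?",
    "only people who worked with Epic",
    "by the way, update my profile",
    "but back to the search, also in production",
]:
    step(conv, msg)
```

In this sketch the profile detour does not discard `last_query`, so the final message can still be routed as a refinement of the original search. The same "only people who..." message sent at the start of a fresh conversation would be treated as a new search, because refinement is not yet among the allowed options.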

Continuous Quality Assurance Through Automated Testing

Building a conversational AI system that handles multiple flows introduces two distinct but related challenges:

- How do you ensure that changes in one area don’t break functionality in another?

- How do you verify that prompt refinements improve the user experience without introducing regressions?

We addressed this through a rigorous, continuous quality assurance framework that leverages LangChain evaluators, integrated directly into our CI/CD pipeline. Every code change, every prompt adjustment, every new feature addition triggers a comprehensive suite of automated tests that validate chatbot behavior across all conversation flows.

Our evaluation framework tests multiple dimensions:

- Intent Recognition Accuracy: Does the system correctly identify what the user is trying to do across different phrasings and contexts?

- Response Quality: Are responses helpful, accurate, and appropriately formatted for the conversation flow?

- Flow Transitions: Can the system smoothly handle switches between different types of requests without losing context?

- Edge Cases: How does the system behave when users provide unexpected inputs, incomplete information, or contradictory requests?

- Cost: How do changes (e.g., to prompts or model vendors) affect the monetary cost and token usage of the models?
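As a rough illustration of how checks like these can gate a pipeline, the sketch below scores a stand-in bot on intent accuracy and average token usage, then reports a single pass/fail result. It is deliberately written in plain Python rather than against LangChain's evaluator API, and everything in it is an assumption made for the example: the `Case` and `Result` types, the `fake_bot` function, the intent labels, the token estimate, and the thresholds.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Case:
    message: str
    expected_intent: str


@dataclass(frozen=True)
class Result:
    intent: str
    tokens_used: int


def fake_bot(message):
    """Hypothetical stand-in for the chatbot under test."""
    text = message.lower().strip()
    if not text:
        intent = "clarify"  # edge case: nothing to act on
    elif "profile" in text:
        intent = "update_profile"
    elif "story" in text:
        intent = "capture_story"
    else:
        intent = "find_expert"
    # Crude token proxy: a fixed prompt overhead plus one token per word.
    return Result(intent=intent, tokens_used=200 + len(text.split()))


def evaluate(bot, cases, min_accuracy, max_avg_tokens):
    """Run every case and compare the aggregate scores to the thresholds."""
    results = [bot(case.message) for case in cases]
    hits = sum(r.intent == c.expected_intent for r, c in zip(results, cases))
    accuracy = hits / len(cases)
    avg_tokens = sum(r.tokens_used for r in results) / len(cases)
    return {
        "accuracy": accuracy,
        "avg_tokens": avg_tokens,
        "passed": accuracy >= min_accuracy and avg_tokens <= max_avg_tokens,
    }


CASES = [
    Case("Who can help interview for a Kotlin role?", "find_expert"),
    Case("Has anyone implemented HL7 interfaces in production?", "find_expert"),
    Case("Please update my profile", "update_profile"),
    Case("I want to tell a story about my last project", "capture_story"),
    Case("", "clarify"),
]

report = evaluate(fake_bot, CASES, min_accuracy=0.9, max_avg_tokens=250)
```

In a CI job, a final `assert report["passed"]` would fail the build whenever a prompt or model change pushes accuracy below the threshold or average token usage above the budget.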

This automated testing approach has proven valuable in practice. We can confidently iterate on the system knowing that regressions will be caught before they reach users. When we improve prompt engineering for one flow, we immediately see if it impacts others. When we add new features, we validate that existing functionality remains intact.

The testing data itself comes from both synthetic data and real user interactions, ensuring our evaluations reflect actual usage patterns rather than hypothetical scenarios. As ExpertFinder learns from more conversations, our test suite grows more comprehensive, creating a cycle of quality improvement.

From implementation experience, this evaluation framework is valuable because it creates a measurable engineering process for conversational AI changes. Fast, accessible testing gives developers room to iterate quickly without losing control, while surfacing latency and model-cost impact early in the release cycle.

The main challenge is evaluation design, not execution. Scenarios must reflect real behavior, quality criteria must be precise enough to align human and LLM judgments, and the suite must continuously expand beyond happy paths to remain representative.

In practice, the hardest failures are rarely obvious crashes; they are subtle shifts, like prompt overlap between similar intents, where responses sound correct but the assistant routes to the wrong flow. These issues often pass casual testing because everything appears fluent on the surface. Automated evaluation across longer, realistic conversation sequences is what stress-tests this behavior and makes the assistant truly reliable in real usage.

– Mario, Senior ML Engineer on ExpertFinder

Frictionless Knowledge Acquisition

Most knowledge base initiatives fail for a simple reason: they behave like homework. Long forms, rigid skill matrices, and one-time surveys might look structured, but they don’t survive real delivery pressure.

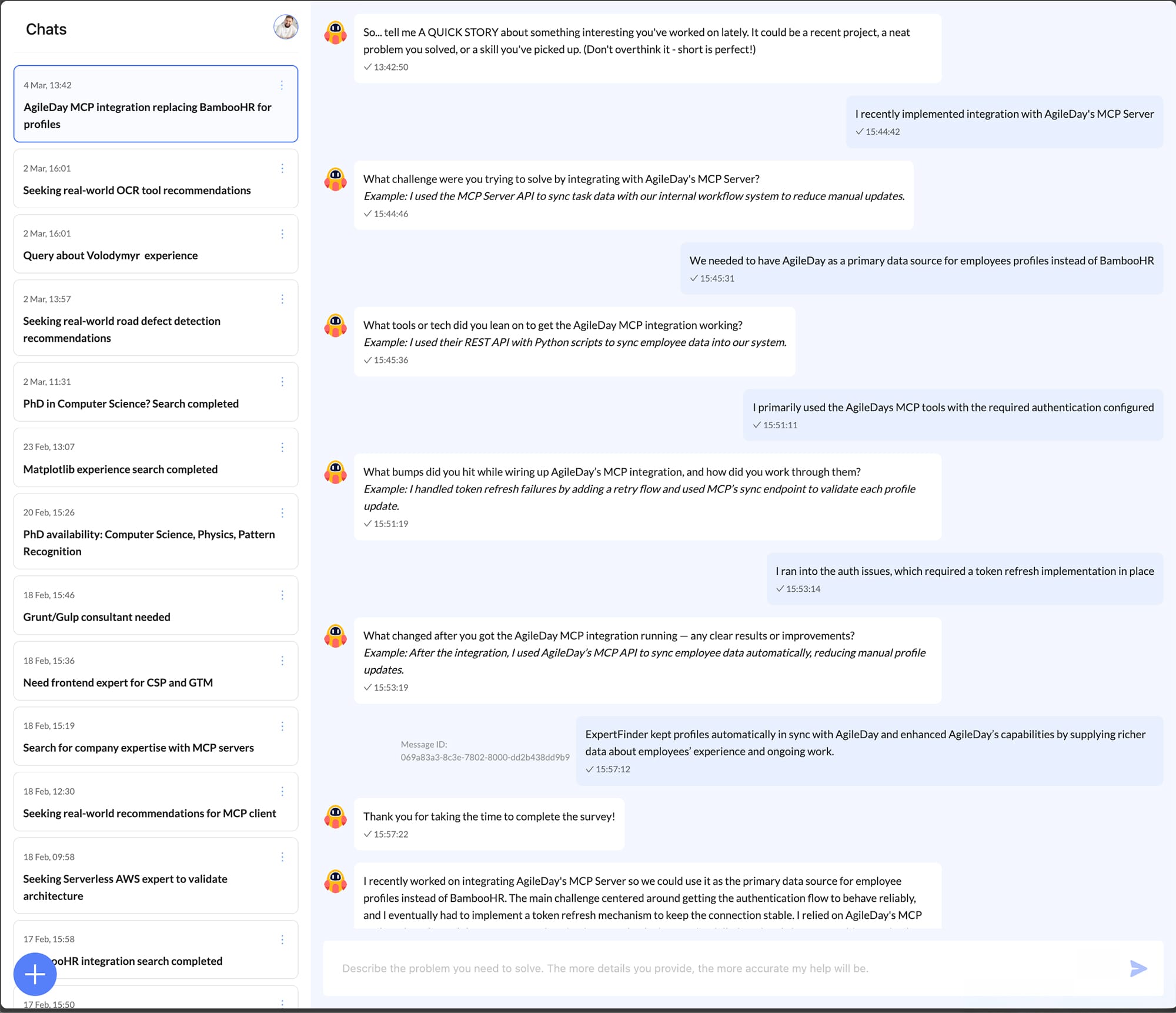

ExpertFinder takes a different route. We design knowledge capture as a set of lightweight, engaging micro-experiences: small interactions that fit naturally into a workday and make it easy for people to share what they’ve done. “Tell ExpertFinder a Story” is one example of that approach.

After a recent client project (or even a personal side project), ExpertFinder guides a person through five simple prompts designed to capture signal without demanding effort:

- What was done?

- Which problem did the action solve?

- Which tools/technologies were used?

- What proved challenging?

- What was the outcome?

Answers can be as short as a few words and given in the user’s preferred language. Users can skip any question or stop halfway through, and it still works. The goal is a steady accumulation of real context with near-zero friction, even when the answers are not complete.

ExpertFinder then turns whatever input it receives into a short, structured summary. Over time, these small contributions compound into something traditional skills databases rarely achieve: a living picture of what people have actually built, under which constraints, and with what outcomes. That way, matching becomes smarter and more confident with every story shared.
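The capture step described above can be sketched in a few lines of Python. This is a simplified illustration, not the production implementation: the field keys, the `collect_story` and `summarize` functions, and the summary labels are hypothetical, and a plain template stands in for whatever summarization ExpertFinder actually performs. The point is that skipped questions and early exits are treated as normal input rather than as errors.

```python
# The five prompts from the story flow, paired with hypothetical field keys.
PROMPTS = [
    ("what", "What was done?"),
    ("problem", "Which problem did the action solve?"),
    ("tools", "Which tools/technologies were used?"),
    ("challenge", "What proved challenging?"),
    ("outcome", "What was the outcome?"),
]

LABELS = {
    "what": "Did",
    "problem": "Solved",
    "tools": "Tools",
    "challenge": "Challenge",
    "outcome": "Outcome",
}


def collect_story(answers):
    """Pair answers with prompts in order.

    A None or blank answer means the question was skipped. A list shorter
    than five items means the user stopped early; zip simply ends there.
    """
    story = {}
    for (key, _question), answer in zip(PROMPTS, answers):
        if answer and answer.strip():
            story[key] = answer.strip()
    return story


def summarize(author, story):
    """Template stand-in for the summarization step (an assumption)."""
    lines = [f"Story from {author}"]
    for key, _question in PROMPTS:
        if key in story:
            lines.append(f"{LABELS[key]}: {story[key]}")
    return "\n".join(lines)


# The user answered the first question, skipped the second,
# answered the third, and then stopped.
partial = collect_story(["Built HL7 interfaces", None, "Epic"])
summary = summarize("a colleague", partial)
```

Even this partial story yields a usable record with two populated fields, which is the property that lets small contributions accumulate over time.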

But the work doesn’t end there. We’re continually developing additional “fun by design” ways for employees to share experience: interactions that feel natural, lightweight, and worth participating in, so the knowledge base grows through everyday momentum rather than mandate.

How ExpertFinder Serves Newfire Clients

ExpertFinder started as an internal initiative. But it reflects something bigger about how Newfire approaches AI.

We don’t build AI features for the sake of novelty. We apply it to real workflows, integrate it into daily operations, and make sure it works reliably in practice. ExpertFinder is one example of that mindset in action.

For Newfire’s clients, many of them digital health companies building complex platforms or modernizing their engineering organizations, projects often require highly specific experience. Teams need to make architectural decisions quickly, solve unfamiliar technical problems, and apply patterns that have already proven to work in production environments.

ExpertFinder helps Newfire surface that experience faster and more reliably. When a project requires niche knowledge, whether related to AI systems, interoperability, platform architecture, or large-scale product development, teams can quickly identify colleagues who have already solved similar problems in real production contexts, not just worked with the underlying technologies.

This allows projects to ramp up faster and reduces the risk of trial-and-error decision-making. Instead of searching for answers or rediscovering solutions, teams can draw directly on real implementation experience across the organization.

In complex environments such as U.S. healthcare technology, where timelines are tight and the cost of mistakes is high, that combination of speed and experience can make a meaningful difference in how projects are delivered.

From Internal Tool to Operational Capability

ExpertFinder began as a practical response to a challenge Newfire encountered as it scaled: how to make real engineering experience visible and reusable across a growing organization. What started as an internal tool has evolved into a system that continuously captures project knowledge, surfaces expertise when it’s needed, and helps teams make better decisions faster. More broadly, it reflects how Newfire approaches AI: not as a collection of experimental features, but as systems designed to support real work, integrate into everyday tools, and improve continuously through use.

If you’re interested in how engineering teams are applying AI to real-world workflows, subscribe to stay updated on new insights from the Newfire team.
