Raising Healthy and Productive Agents: A Framework for Intentional AI Growth

The concept of “Raising Healthy Agents” is a strategic framework for maximizing the Return on Investment (ROI) from modern AI systems. Just as dedicated care, environment, and education are essential for raising a productive child, developing high-performing, reliable AI agents capable of tackling complex, autonomous tasks requires a holistic approach to their development. We draw a parallel between the human endeavor of thoughtful parenting and the technical process of designing AI systems: both require intentional inputs, continuous learning loops, and moral guidance (philosophy and governance). This article explores the necessary environment, nourishment, guidance, and tools, grounded in sound philosophical concepts and hard scientific frameworks, that allow AI agents to learn, adapt, and thrive continuously.

The Ingredients for Healthy Growth

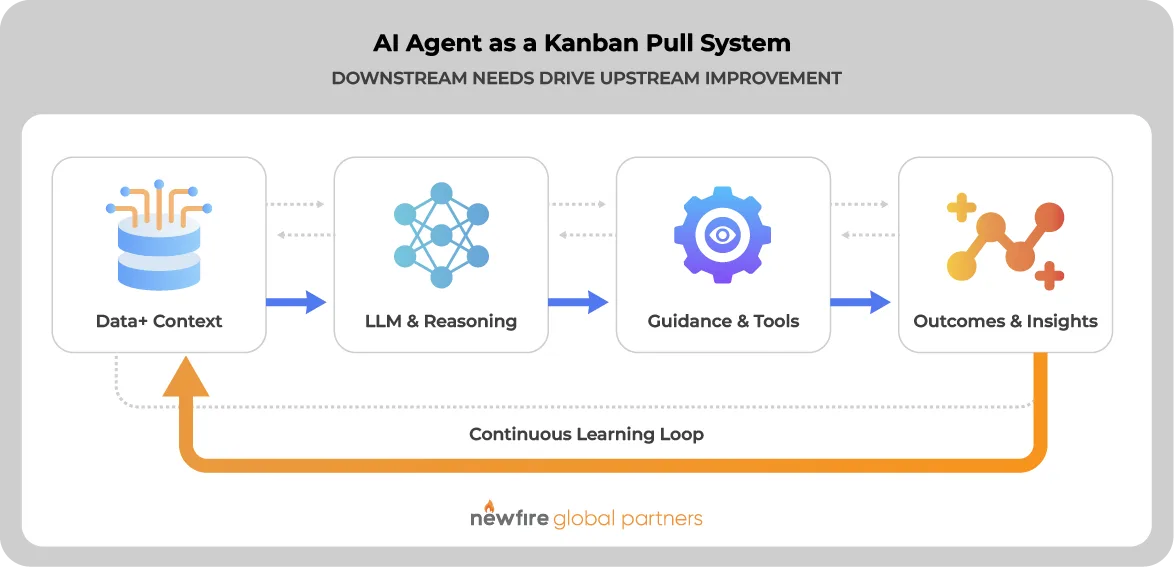

At the heart of agency is intentional action: doing things for a reason, with purpose, and learning from what happens next. To nurture this in AI, think of the agent as a dynamic factory:

- Raw materials (Data + Context): The raw data fed to the agent is essential, but insufficient. It needs rich, well-documented metadata, context, and dimensions, like the semantic layers and business terms in an organization, so the agent understands not just the “what” but the “why” and “how.”

- Processing engine (LLMs & Reasoning): The reasoning core, such as large language models (LLMs) and data warehouses, which transforms raw inputs into decisions.

- Expertise (Guidance & Tools): Embedded domain knowledge, reference frameworks, and the guiding hand of human expertise.

Healthy agents thrive when these components work in a continuous feedback loop. Decisions lead to new insights, which refine future decisions. This loop works like a Kanban pull system, where downstream needs “pull” higher-quality inputs upstream, balancing demand and supply for smooth, adaptive performance.
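As a rough illustration of that loop, the sketch below wires the three ingredients together in Python. Everything in it is hypothetical: the `Agent` class, the toy reasoner (a stand-in for an LLM call), and the toy reviewer (a stand-in for a human expert) are assumptions made for the example, not part of any specific framework.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Agent:
    # Raw materials: data plus the business context that explains it.
    context: dict
    # Processing engine: any callable that turns a question and context into a decision.
    reason: Callable[[str, dict], str]
    # Expertise: a reviewer that returns a correction, or None to accept the decision.
    review: Callable[[str], Optional[str]]
    history: list = field(default_factory=list)

    def step(self, question: str) -> str:
        decision = self.reason(question, self.context)
        feedback = self.review(decision)
        if feedback is not None:
            # Downstream need "pulls" a better input upstream:
            # the correction becomes context for the next decision.
            self.context[question] = feedback
        self.history.append(decision)
        return decision


def toy_reasoner(question: str, context: dict) -> str:
    # Stand-in for an LLM call: answer from context when a definition exists.
    return context.get(question, "unknown")


def toy_reviewer(decision: str) -> Optional[str]:
    # Stand-in for a human expert who supplies the missing definition.
    return "revenue minus refunds" if decision == "unknown" else None


agent = Agent(context={}, reason=toy_reasoner, review=toy_reviewer)
first = agent.step("net revenue")   # no context yet, so the agent cannot answer
second = agent.step("net revenue")  # the reviewer's feedback now shapes the decision
```

The first call exposes a gap and the second call benefits from the feedback it triggered, which is the loop in miniature.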

Philosophical Roots to Keep in Mind

Just like good parenting rests on solid principles, healthy AI agents need to be grounded in sound philosophy. Without it, even technically sophisticated systems risk becoming brittle, misaligned, or operationally noisy.

Intentionality

Is an agent simply a system that acts? A more useful definition is a system that acts for a reason. In practice, this means tying actions to explicit goals, constraints, and values. The more clearly those purposes are defined, the more reliably the agent can distinguish between activity and meaningful progress. Intentionality is what turns a model from a reactive mechanism into a system capable of contributing toward a business outcome.

Circular causality

For AI agents, every action changes the environment, and that changed environment shapes the next set of inputs, decisions, and behaviors. This circular relationship is essential to understanding why agent performance cannot be judged only at the point of output. A healthy agent must be designed with awareness that its choices influence the very conditions under which it will act again.

Autonomy and reflexivity

All too often, useful autonomy is taken to mean an absence of oversight. In fact, it is the capacity to act within a defined frame and improve through reflection. Reflexivity gives an agent the ability to examine the effects of its own behavior, compare results to intent, and adjust accordingly. This is what allows systems to learn from outcomes rather than simply repeat patterns. In practical terms, reflexivity requires feedback signals, measurable objectives, and mechanisms for adaptation.
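One way to picture those three requirements is a small control loop. The sketch below is a deliberately simplified assumption: the `reflect` function, the toy `environment`, and the numeric `frame` are all hypothetical, and a real agent would adapt prompts, tools, or policies rather than a single number.

```python
def reflect(intended, observed, setting, rate, frame):
    """Return an adjusted setting that moves the outcome toward the intent."""
    error = intended - observed        # feedback signal: gap between intent and result
    proposed = setting + rate * error  # adaptation: nudge the setting to close the gap
    low, high = frame
    return min(max(proposed, low), high)  # autonomy stays inside the defined frame


def environment(setting):
    # Hypothetical environment: the outcome is simply twice the setting.
    return 2.0 * setting


target = 10.0       # measurable objective
setting = 1.0       # the agent's current behavior
frame = (0.0, 5.0)  # limits set by oversight
gaps = []
for _ in range(5):
    outcome = environment(setting)
    gaps.append(abs(target - outcome))
    setting = reflect(target, outcome, setting, rate=0.25, frame=frame)
```

Each pass compares the result to the intent and adjusts, so the recorded gap shrinks from one iteration to the next while the setting never leaves the allowed range.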

Adaptation through directed evolution

High-performing agents improve through structured variation, evaluation, and selection. Like directed evolution in science, this process depends on testing strategies in context, learning from what performs well, and reinforcing what produces better outcomes. The key distinction is that this evolution is not random. It is guided by human judgment, governance, and business priorities, ensuring that improvement does not come at the expense of reliability, compliance, or trust.
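The variation, evaluation, and selection cycle can be sketched in a few lines of Python. This is a toy under stated assumptions: the function name `directed_evolution`, the integer "strategy", the scoring function, and the compliance cap of 10 are all invented for illustration.

```python
def directed_evolution(seed, vary, score, is_allowed, generations):
    """Keep the best-scoring strategy that also passes the governance check."""
    best = seed
    for _ in range(generations):
        # Variation, filtered by governance before anything is evaluated.
        candidates = [c for c in vary(best) if is_allowed(c)]
        # Selection: the incumbent survives unless a permitted variant scores higher.
        best = max(candidates + [best], key=score)
    return best


def vary(n):
    # Hypothetical variations of a strategy represented as an integer.
    return [n - 1, n + 1, n * 2]


def score(n):
    # Hypothetical business metric that peaks at 12.
    return -(n - 12) ** 2


def is_allowed(n):
    # Hypothetical compliance rule: values above 10 are not permitted.
    return n <= 10


result = directed_evolution(seed=2, vary=vary, score=score,
                            is_allowed=is_allowed, generations=5)
```

The search settles on 10 rather than the unconstrained optimum of 12, which shows selection pressure operating inside the limits that governance sets.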

These principles matter because healthy agency implies a disciplined relationship between action, feedback, and purpose. An agent becomes more useful and powerful by learning in context, adapting with direction, and remaining grounded in the goals and values that shape its decisions. In that sense, raising healthy AI agents means designing systems that do not just act autonomously, but improve responsibly over time.

Practical Tools and Frameworks for Raising AI Agents

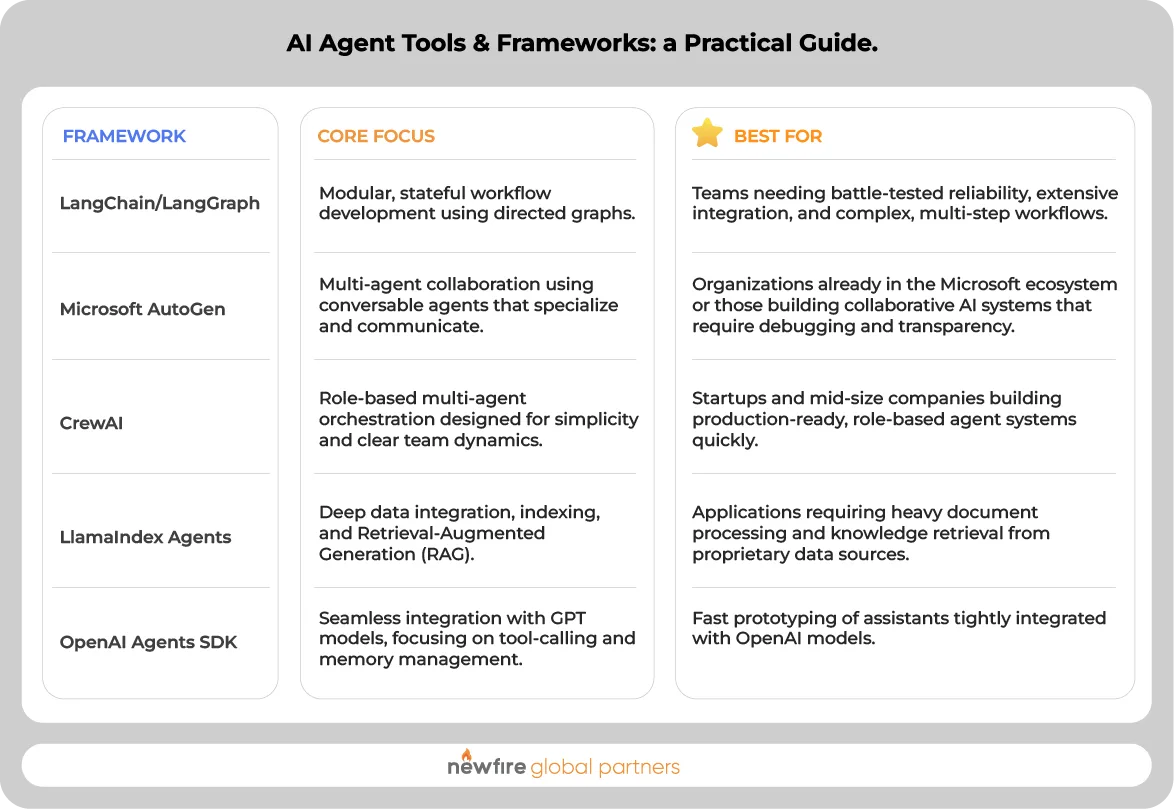

To build and nurture these AI agents, several mature frameworks are available that help developers orchestrate the many parts of an agent, from memory and tool integrations to human oversight.

These tools provide the structure to implement sophisticated multi-agent architectures that mimic effective human teams, supporting long-term health and adaptability.

The Critical Role of Rich Metadata

Feeding an AI agent raw data without context is like sending a child to school with no textbooks or teachers.

For an analytics agent, having access only to data lakes or tables is severely insufficient. The agent must understand the semantic layers, business terms, roles, and organizational context. Without this rich documentation and dimensioning:

- Insights are Constrained: The agent’s insights will be shallow, potentially erroneous, and lacking in actionable depth.

- Decisions are Misaligned: Decisions made might be out of alignment with current business realities, organizational goals, or documented regulatory requirements.

- Governance Fails: Without clear documentation of business roles, permissions, and data lineage (all part of the metadata layer), agents lack the context on who can access what and why. They must then either be tightly restricted or risk violating compliance rules.

Good metadata acts as nutrition for the agent’s cognitive development, giving it the conceptual grounding to interpret, reason, and make valuable decisions. Investing in clear documentation, up-to-date ontologies, and business glossaries is essential to raising AI agents that are both healthy and impactful.
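To make this concrete, the sketch below shows one possible shape for a metadata layer that supplies both meaning and permissions. The column names, business terms, roles, and the `build_context` helper are all hypothetical and do not describe any particular catalog or semantic-layer product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ColumnMetadata:
    business_term: str
    definition: str
    allowed_roles: frozenset


# Hypothetical semantic layer: physical columns mapped to business meaning and access rules.
SEMANTIC_LAYER = {
    "orders.amt_net": ColumnMetadata(
        "Net revenue", "Order amount after refunds, in USD.",
        frozenset({"finance", "analyst"})),
    "customers.ssn": ColumnMetadata(
        "Social security number", "Regulated personal identifier.",
        frozenset({"compliance"})),
}


def build_context(columns, role):
    """Return context lines for columns this role may see, plus the columns withheld."""
    visible, withheld = [], []
    for col in columns:
        meta = SEMANTIC_LAYER.get(col)
        if meta is None or role not in meta.allowed_roles:
            # Undocumented or unauthorized columns never reach the agent.
            withheld.append(col)
            continue
        visible.append(f"{col}: {meta.business_term} ({meta.definition})")
    return visible, withheld


visible, withheld = build_context(
    ["orders.amt_net", "customers.ssn", "tmp.x1"], role="analyst")
```

In this sketch the agent receives a definition it can reason with for the net revenue column, while the regulated column and the undocumented table are held back instead of being interpreted by guesswork.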

Continuous Evolution: The Agent’s Learning Environment

For high ROI, the AI agent must exist within a robust ecosystem designed for growth. Agents evolve faster when they are rooted in an environment of continuous learning, documentation, and measurement. This ensures that the agent’s performance is not static but is continually optimized against real-world objectives.

Crucially, analytics agents and the business requirements they are meant to meet should live in the same environment, ideally within a centralized governance layer that provides immediate access to context. This alignment means that every action an analytics agent performs is executed in the context of the question or outcome it addresses, which keeps the action relevant and clearly attributable. By linking every action back to a defined business purpose and measuring its impact, we create the conditions for directed evolution and sustained productivity.
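A minimal sketch of that linkage, assuming a simple in-memory log, might look like the following. The `ActionLog` class, the question identifiers, and the unitless `impact` score are hypothetical choices made for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ActionRecord:
    action: str
    question_id: str
    impact: Optional[float] = None  # filled in once the outcome has been measured


class ActionLog:
    def __init__(self, questions):
        # Registered business questions, keyed by identifier.
        self.questions = dict(questions)
        self.records = []

    def record(self, action, question_id):
        # Refuse any action that cannot be tied to a registered business question.
        if question_id not in self.questions:
            raise ValueError(f"no business question registered for {question_id!r}")
        rec = ActionRecord(action, question_id)
        self.records.append(rec)
        return rec

    def impact_by_question(self):
        # Sum measured impact per question so each outcome has clear attribution.
        totals = {}
        for rec in self.records:
            if rec.impact is not None:
                totals[rec.question_id] = totals.get(rec.question_id, 0.0) + rec.impact
        return totals


log = ActionLog({"Q1": "Why did churn rise in March?"})
log.record("run cohort query", "Q1").impact = 0.5
log.record("draft summary", "Q1").impact = 0.25
totals = log.impact_by_question()
```

Because `record` rejects unknown question identifiers, an action without a stated purpose never enters the log, and the per-question totals give the measurement signal that directed evolution depends on.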

Healthy Agents Are Designed, Not Discovered

Raising healthy and productive AI agents is a deliberate design challenge that combines rich data, contextual grounding, human expertise, feedback loops, and strong governance into a system that can learn with purpose.

The organizations that see the greatest ROI from AI will not be the ones that deploy agents fastest. They will be the ones that raise them best: with clear intent, structured environments, measurable feedback, and enough philosophical discipline to ensure that autonomy remains aligned with real-world goals. In that sense, healthy agents are less like software features and more like long-term capabilities. They require cultivation. Given the right inputs, the right learning environment, and the right moral and operational boundaries, they do more than automate tasks. They become adaptive systems that improve decision-making, strengthen execution, and create durable value over time.
