Shipping Knowledge: The Best Path to Better Decisions

Most organizations invest heavily in data, yet struggle to turn it into decisions that hold up in practice. Dashboards are built, pipelines are shipped, and models are deployed. Yet when it is time to act, teams still lack clarity. The issue is not access to data but the absence of decision-ready knowledge.

This article introduces a practical operating model you can use to treat knowledge as a product: something that can be designed, measured, and reliably delivered to the people who need it most. If your teams are investing in data, analytics, or AI but not seeing consistent business impact, this framework will help you understand why and what to change.

The Core Mandate: Knowledge as a First-Class Product

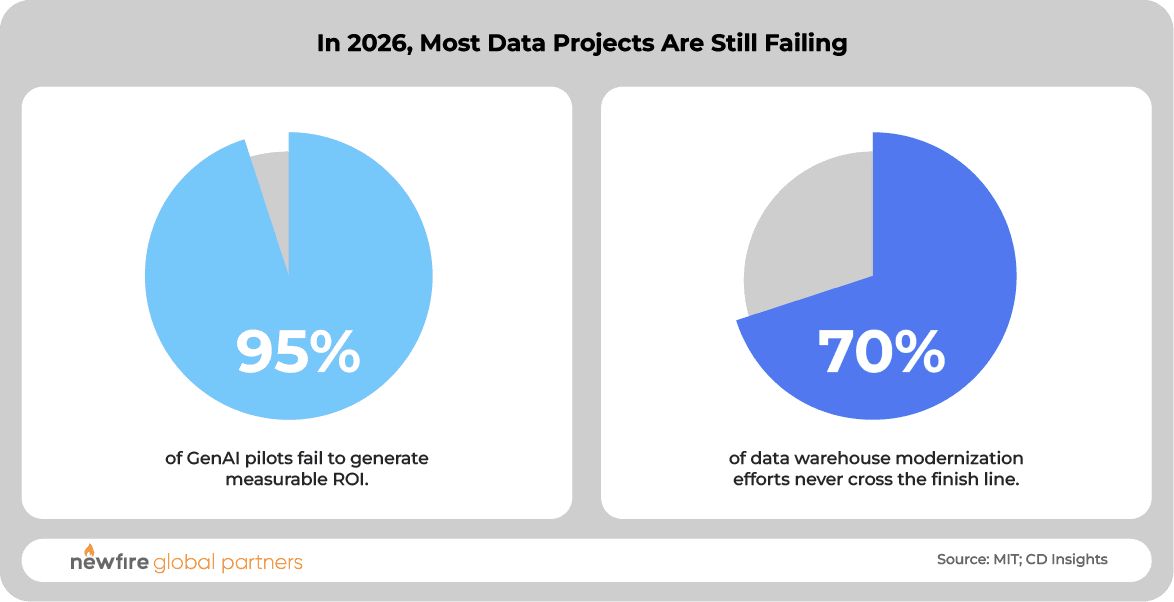

Despite years of investment, most data-driven initiatives still fail to deliver meaningful business impact. At least, that’s what recent evidence suggests. A 2025 MIT study on enterprise generative AI reports that roughly 95% of GenAI pilots fail to generate measurable profit and loss gains. Similarly, 2026 analyses of large‑scale data warehouse modernization efforts indicate that up to 70% of these projects either fail outright or significantly overrun budgets and timelines.

Illustration: Newfire Global Partners / data: MIT; CD Insights

These high failure rates appear to stem from a framing problem: organizations treat data as the objective rather than as the raw material for knowledge, a product that must be manufactured, managed, and reliably delivered to decision-makers.

To achieve tangible, measurable return on investment (ROI), organizations must adopt the mindset that knowledge is a product and apply the same rigor found in software engineering. In analytics engineering (the discipline of transforming raw data into something usable), this is called Shipping Knowledge.

From Data to Knowledge: The Operating Model

Most organizations treat data and knowledge as interchangeable. They are not: data is raw material, while knowledge is decision-ready output that has been processed, validated, and shaped by domain expertise.

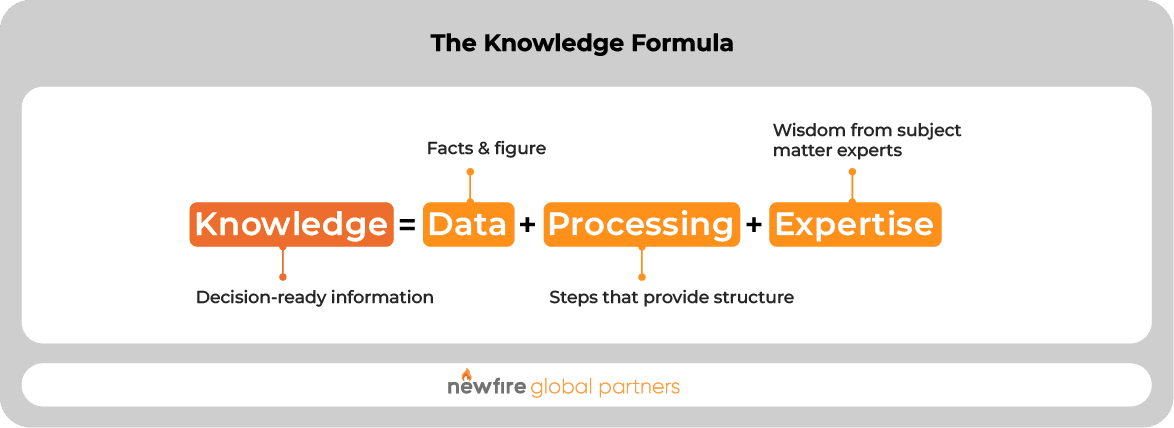

That relationship can be expressed in a straightforward way, using the Knowledge Formula, which combines three components: data, processing, and expertise.

Each component is necessary, and none is sufficient on its own:

- Data supplies the raw material from which knowledge is produced.

- Processing transforms raw inputs into structured outputs through cleaning, modeling, and analysis.

- Expertise, provided by human subject matter experts, determines what matters: what questions to answer, how results are interpreted, and how knowledge is delivered.

When one of these elements is weak or missing, the result degrades. Data without processing is unusable. Processing without expertise produces technically correct but irrelevant outputs. Expertise without reliable data and systems does not scale.

Taken together, these components form a system. We refer to this conceptual operational model as the Knowledge Manufacturing Process (KMP). It governs how knowledge is produced and delivered. It is continuous, repeatable, and subject to the same constraints as any production system.

Viewing knowledge production as a continuous manufacturing process makes it possible to analyze where output is constrained. The ‘machine’ that produces knowledge depends critically on the quality and availability of its three inputs: data, processing power, and expertise. A shortcoming in any one of them acts as a constraint (or bottleneck) that limits overall output. In this model, management continuously monitors the entire KMP to identify and target the most restrictive constraint, so the organization can improve outcomes with the least effort and cost.
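The idea of targeting the most restrictive constraint can be sketched in a few lines of Python. This is a minimal illustration, not part of the KMP itself: the `InputCapacity` type, the capacity unit (knowledge products per quarter), and the sample numbers are all hypothetical, and it assumes that overall output is capped by the input with the lowest capacity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InputCapacity:
    """One KMP input and how much output it could support on its own."""
    name: str
    capacity: float  # hypothetical unit: knowledge products per quarter


def most_restrictive_constraint(inputs):
    """Return the input with the lowest capacity.

    Assumption: the slowest input caps the output of the whole process.
    """
    if not inputs:
        raise ValueError("at least one input is required")
    return min(inputs, key=lambda item: item.capacity)


# Illustrative numbers only; real capacities would come from measurement.
inputs = [
    InputCapacity("data", 12.0),
    InputCapacity("processing", 20.0),
    InputCapacity("expertise", 5.0),
]

bottleneck = most_restrictive_constraint(inputs)
print(f"Bottleneck: {bottleneck.name} (output capped at {bottleneck.capacity})")
```

In this example, adding processing capacity would not raise output, because expertise is the limiting input. Improvement effort is better spent there first.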

Five Core KMP Principles

High-performing teams do not treat knowledge work as exploratory or open-ended. They operate against a clear set of principles that align effort with measurable outcomes.

Five principles consistently show up in organizations that reliably turn data into decisions:

- Start with Business Opportunity: All work must begin by clearly defining the specific business problem, the decision to be supported, and the measurable outcome.

- Identify Required Knowledge: Shift focus from available data to the specific knowledge required by the decision-maker to directly answer critical questions.

- Be Clear on Value: Standardize and quantify the value of knowledge products based on tangible business outcomes (time savings, revenue uplift, risk reduction).

- Factor Cost into the Backlog: Require the cost of the entire knowledge lifecycle, the Total Cost of Knowledge (TCOK), to be estimated and tracked against the defined value in a forced-rank backlog.

- Design the Solution: Establish a disciplined, repeatable system for delivery that moves knowledge creation out of ad-hoc projects and into a predictable, measurable production line.

Individually, these principles improve clarity. Together, they shift knowledge work from reactive execution to disciplined production, where outcomes are predictable, measurable, and continuously improving.

Outcomes of Adopting a Knowledge Product Mindset

Treating knowledge as a product can drive measurable business performance, helping organizations move beyond fragmented outputs and begin to deliver consistent, decision-ready insights. The result is not just better data, but faster, more reliable execution across the business, which shows up as:

- More Value & Opportunity Capture: By quantifying value upfront, every product delivers a measurable ROI, enabling the business to capitalize on time-sensitive opportunities.

- Timely & Faster Delivery: The KMP assembly-line approach reduces time-to-market, ensuring insights arrive in time to influence the decision before their value depreciates.

- Predictable, Scalable Efficiency: Standardizing the KMP reduces technical debt, eliminates costly rework, and lowers the long-term unit cost of maintaining high-quality knowledge.

- Mitigated Decision-Making Risk: Rigorous QA provides the necessary proof of correctness, reducing the risk of costly wrong decisions and making knowledge safe to act on.

Taken together, these effects compound, accelerating decision cycles, increasing confidence in execution, and reducing the cost of getting it wrong. This is the difference between generating data and delivering impact.

Why This Matters Now: The AI Amplifier

Structural issues in the knowledge production process become more visible and more costly in the context of AI.

Most organizations approach AI as a layer on top of existing data systems. In practice, those systems were never designed to produce reliable, decision-ready knowledge. As a result, AI initiatives often stall after initial pilots or fail to generate measurable impact.

The limitation is not the model but the quality and structure of the knowledge it depends on.

Without a system for consistently producing validated, decision-ready knowledge, AI cannot scale beyond experimentation. With it, AI becomes significantly more effective because it is grounded in outputs that are already aligned with business decisions.

Linking Knowledge to Business Value

Treating knowledge as a product requires the same financial discipline as any other business investment. Work is prioritized based on measurable impact (time saved, revenue generated, or risk reduced) and evaluated against the full cost of delivery, including data, processing, and expertise.

This shifts knowledge work from open-ended exploration to a managed portfolio of investments, where value and cost are continuously balanced and adjusted over time. Without this discipline, prioritization breaks down and impact becomes inconsistent.
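A forced-rank backlog of this kind can be sketched as follows. This is an illustration under stated assumptions: the article does not define an ROI formula, so the sketch assumes the common form (value minus cost, divided by cost). The `BacklogItem` type, the item names, and all figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BacklogItem:
    """A candidate knowledge product with estimated value and full cost."""
    name: str
    estimated_value: float  # e.g. time saved, revenue, or risk reduced, in currency
    total_cost: float       # Total Cost of Knowledge: data + processing + expertise

    def __post_init__(self):
        if self.total_cost <= 0:
            raise ValueError("total_cost must be positive")

    @property
    def roi(self) -> float:
        # Assumed formula: (value - cost) / cost
        return (self.estimated_value - self.total_cost) / self.total_cost


def forced_rank(items):
    """Order the backlog from highest to lowest ROI."""
    return sorted(items, key=lambda item: item.roi, reverse=True)


# Illustrative figures only.
backlog = [
    BacklogItem("churn early-warning report", 300_000, 100_000),
    BacklogItem("pricing elasticity model", 500_000, 400_000),
    BacklogItem("executive KPI dashboard", 90_000, 60_000),
]

ranked = forced_rank(backlog)
for position, item in enumerate(ranked, start=1):
    print(f"{position}. {item.name}: ROI {item.roi:.2f}")
```

Note that the item with the largest absolute value (the pricing model) ranks last here because its cost is also the highest. Re-running the ranking as estimates change is one way to keep value and cost balanced over time.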

Critical Attributes of a Knowledge Product

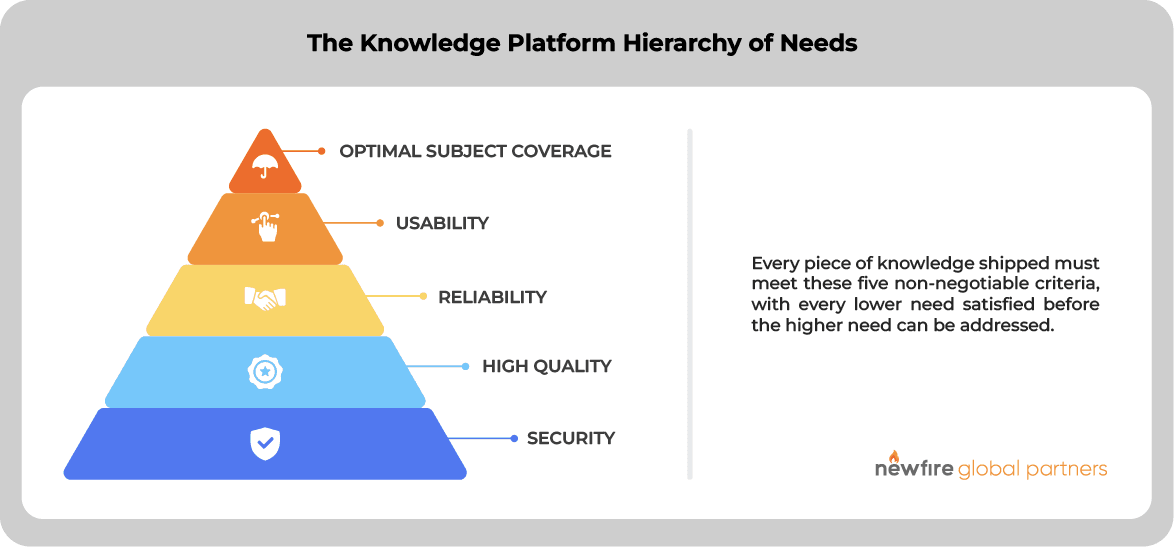

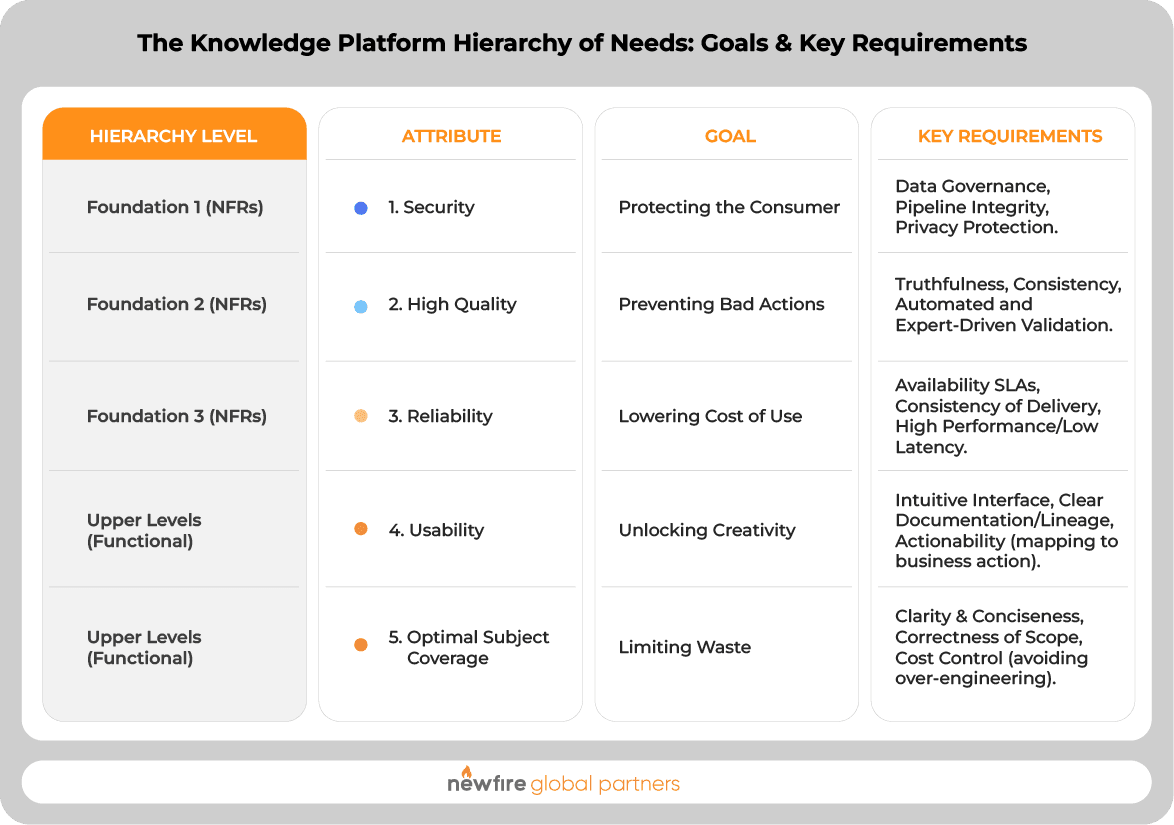

Not all knowledge is equally usable. For knowledge to reliably support decisions, it must meet a defined set of standards. These attributes form the Knowledge Platform Hierarchy of Needs.

Foundational requirements (security, quality, and reliability) must be satisfied first. Without them, higher-level capabilities like usability and completeness do not matter, because the output cannot be trusted or consistently used.

These attributes are baseline requirements for any knowledge product intended to influence real decisions. Organizations that ignore the foundation often invest heavily in usability or coverage, only to find that outputs are not trusted or adopted. Those that enforce the hierarchy build systems where knowledge is reliable, actionable, and consistently used.
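One way to enforce the hierarchy is a simple gate that reports higher-level gaps only once the foundation passes. The sketch below assumes two tiers built from the attributes named in the text. The tier constants, the `next_gaps` function, and the pass/fail inputs are hypothetical, and a real assessment would likely use richer measures than booleans.

```python
# Tiers taken from the attributes named in the text; the two-tier split
# and pass/fail scoring are simplifying assumptions.
FOUNDATIONAL = ("security", "quality", "reliability")
HIGHER_LEVEL = ("usability", "completeness")


def next_gaps(checks: dict) -> list:
    """Return the attributes to fix next, respecting the hierarchy.

    Any failing foundational attribute blocks higher-level work, so
    higher-level gaps are reported only when the foundation passes.
    Attributes missing from `checks` are treated as failing.
    """
    foundational_gaps = [a for a in FOUNDATIONAL if not checks.get(a, False)]
    if foundational_gaps:
        return foundational_gaps
    return [a for a in HIGHER_LEVEL if not checks.get(a, False)]


# Illustrative assessment of one knowledge product.
checks = {
    "security": True,
    "quality": False,
    "reliability": True,
    "usability": False,
    "completeness": True,
}

gaps = next_gaps(checks)
print("Fix first:", gaps)
```

Here the usability gap is deliberately not reported until the quality gap is closed, which mirrors the point that polish on an untrusted output does not drive adoption.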

The Knowledge Manufacturing Process: Execution (PPT)

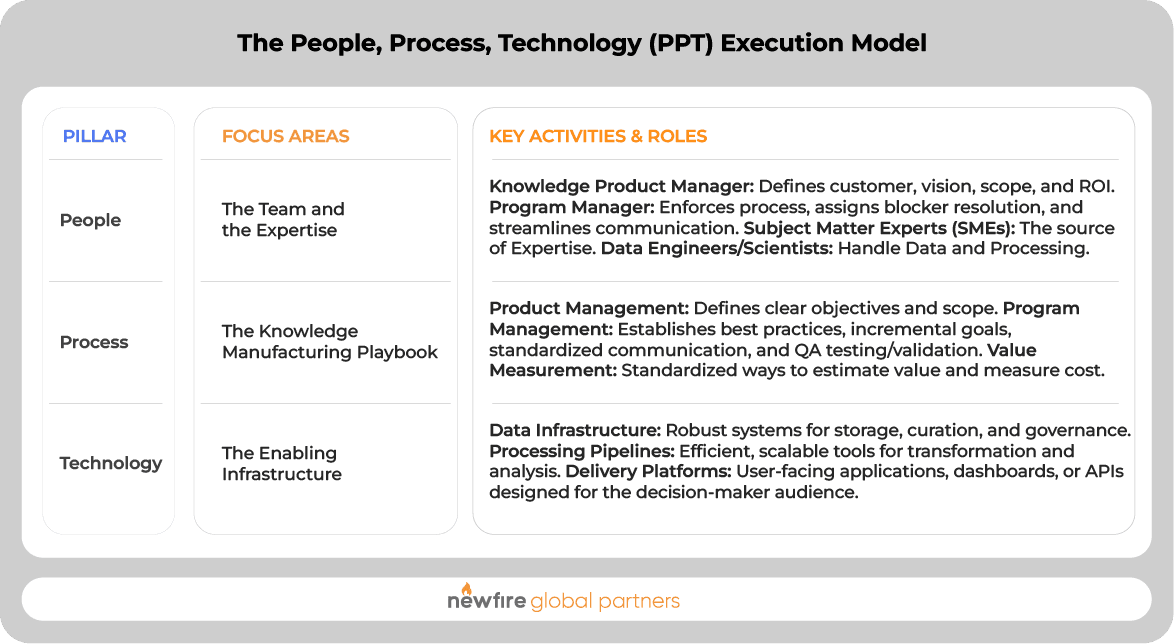

Execution requires more than tools or isolated improvements. It depends on a coordinated system across people, process, and technology (PPT). These three pillars define how knowledge is consistently produced and delivered.

In practice, this model translates into the following structure:

The Knowledge Manufacturing Playbook: Seven Core Steps

Adopting the Knowledge Product mindset means following this disciplined, seven-step playbook for every significant initiative:

- Start with Clear Objectives: Identify the customer, their needs, and the decision you are enabling.

- Be Disciplined with Scope: Define what will and will not be done to maintain focus. Secure committed executive sponsorship.

- Evaluate Current State Objectively: Assess current knowledge production against the Critical Attributes checklist to find gaps and identify potential constraints.

- Create a Vision of Future State: Quantify the desired capability. Who will be unblocked, and what is the bottom-line impact?

- Craft a Solution: Design a solution that fits the PPT and budget constraints, quantifying the gap between current and future state.

- Create an ROI-Driven Backlog: Estimate value and measure cost to calculate ROI. Adjust priority based on stakeholder alignment and uncertainty (which magnifies risk), prioritizing features that address the most severe constraints.

- Build Effective Program Management: Define roles and responsibilities, use incremental goals, assign clear blocker resolution ownership, and establish rigorous QA and validation processes.

Execution is where most data initiatives break down, not for lack of capability, but for lack of coordination. When people, process, and technology are aligned around a clear model, knowledge production becomes consistent, measurable, and scalable. Without that alignment, even well-funded efforts struggle to deliver sustained impact.

From Data to Decisions That Hold Up

Most organizations are not short on data, tools, or investment. What’s missing is a reliable system for turning those inputs into knowledge that can be used with confidence. Treating knowledge as a product changes that. It introduces structure, accountability, and discipline into how insights are produced and delivered, shifting the focus from outputs to outcomes.

Done well, this can lead to a different operating model: one where decisions are faster, execution is more predictable, and investments in data and AI translate into measurable business impact. This is what allows organizations to move from experimentation to sustained performance.
